Architecting Product Owner to QA Delivery Pipelines with Claude Code

April 03, 2026

This chapter details the architectural patterns and practical implementation of automated software delivery pipelines driven by Product Owner intent and validated by AI QA, leveraging Claude Code and Azure AI. We will explore how to bridge the gap between initial product vision and robust, tested software.

Curated by Jepoy · AI-Generated Content

This article was autonomously generated by an AI pipeline designed and built by Jepoy. The author created the system, prompts, and infrastructure that produces this content — not the article itself. Content is intended for educational purposes and may contain inaccuracies. Always verify technical details before applying in production.

Bridging Intent to Assurance: The PO-to-QA Pipeline

As senior engineers and architects, we understand that effective software delivery hinges on a tight feedback loop. Traditionally, this loop involved manual handoffs from Product Owners (POs) to developers, then to QA. The advent of advanced AI agents, particularly those powered by models like Claude, offers a transformative opportunity to automate and elevate this cycle. This chapter dives into architecting and implementing a Product Owner to QA software delivery pipeline that automates the translation of intent into verifiable, high-quality code.

Our focus will be on building systems where the “Product Owner” is not just a human stakeholder, but an AI-augmented process that captures and refines requirements, which then feeds into an automated development and testing pipeline. We’ll leverage Claude Code for code generation and refinement, .NET for robust application development, and Azure AI for orchestration and advanced QA capabilities, all orchestrated through agent-based paradigms.

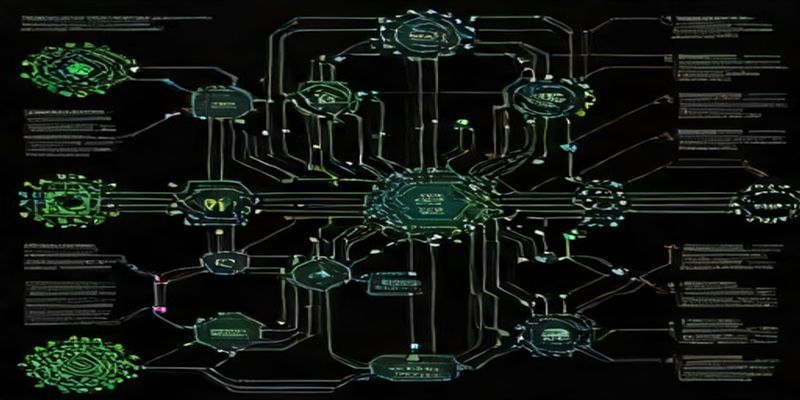

Architectural Blueprint: The Intent-Driven Delivery Framework

At the core of this pipeline lies a layered architectural approach, designed for extensibility and resilience.

- Intent Capture & Refinement Layer:

- Role: This layer is responsible for ingesting and structuring product requirements. This can start with human input (e.g., user stories, feature requests) which is then elaborated and translated into machine-readable formats by an AI agent.

- Key Components:

- Requirement Ingestion Module: Accepts various input formats (plain text, markdown, Jira/Azure DevOps tickets).

- Intent Clarification Agent (Claude Code): Interacts with the ingested requirements, asks clarifying questions (if necessary), and refines them into unambiguous, actionable specifications. This agent can also translate high-level feature descriptions into detailed functional requirements and even initial API contracts.

- Specification Repository: A version-controlled store (e.g., Git repository) for structured specifications (e.g., OpenAPI definitions, BDD feature files, JSON schemas).

- Code Generation & Orchestration Layer:

- Role: Translates refined specifications into executable code and orchestrates the development workflow.

- Key Components:

- Code Generation Agent (Claude Code): Takes refined specifications and generates boilerplate code, unit tests, API endpoints, and even UI components based on predefined templates and best practices.

- Orchestration Engine (Azure Logic Apps/Azure Functions): Manages the workflow. It triggers code generation, integrates with version control, initiates builds, and coordinates testing phases.

- Developer Sandbox Environment: An isolated environment where generated code can be tentatively integrated and tested before committing to the main branch.

- Automated Testing & Assurance Layer:

- Role: Validates the generated code against the original intent and quality standards. This is where AI plays a crucial role beyond traditional unit and integration tests.

- Key Components:

- BDD Test Generation Agent: Can generate Behavior-Driven Development (BDD) test scenarios from refined specifications.

- AI-Powered QA Agent (Azure AI Services, potentially fine-tuned Claude Code): Performs more advanced testing, such as:

- Semantic Testing: Verifying that the output of the application aligns with the meaning and intent of the requirements, not just the literal implementation.

- Edge Case Exploration: Proactively identifying and generating tests for potential edge cases that might be missed by static analysis or standard test suites.

- Security Vulnerability Scanning: Identifying common security anti-patterns.

- Performance Baseline Checks: Ensuring generated code meets basic performance expectations.

- Automated Test Execution Framework: Runs unit, integration, BDD, and AI-driven tests.

- Feedback & Iteration Layer:

- Role: Consolidates test results, identifies failures, and feeds them back into the pipeline for refinement.

- Key Components:

- Result Aggregator: Collects and analyzes test outcomes.

- Failure Analysis Agent: Utilizes AI to pinpoint root causes of failures, potentially suggesting fixes or flagging specific areas for developer review.

- Automated PR/Commit Generation: Creates pull requests or commits with suggested fixes based on failure analysis.

- Human Review Gateway: A crucial checkpoint where human POs or senior developers review AI-generated code, test results, and proposed fixes.

Practical Implementation Snippets

Let’s illustrate with concrete examples using Claude Code for intent refinement and code generation, and Azure AI for orchestration.

1. Intent Clarification and Specification Generation

Imagine a Product Owner submits a high-level request: “As a user, I want to be able to upload profile pictures to my account.”

We can use an Azure Function triggered by a webhook (e.g., from Jira) to initiate this process. The function would send the requirement to Claude Code.

Azure Function (C#) Snippet:

using Azure.Identity;

using Azure.Messaging.ServiceBus;

using Azure.AI.OpenAI; // Assuming Claude is accessible via OpenAI compatible API or a wrapper

public static class IntentClarificationFunction

{

[Function("ClarifyProductIntent")]

public static async Task Run(

[HttpTrigger(AuthorizationLevel.Function, "post")] HttpRequest req,

ILogger log)

{

string requestBody = await new StreamReader(req.Body).ReadToEndAsync();

var requirements = JsonConvert.DeserializeObject<ProductRequirement>(requestBody); // Custom PO req object

var endpoint = Environment.GetEnvironmentVariable("AZURE_OPENAI_ENDPOINT");

var deployName = Environment.GetEnvironmentVariable("CLAUDE_DEPLOYMENT_NAME"); // Claude model deployment name

var key = Environment.GetEnvironmentVariable("AZURE_OPENAI_KEY");

var client = new OpenAIClient(new Uri(endpoint), new AzureKeyCredential(key));

var prompt = $@"

Refine the following product requirement into a detailed functional specification suitable for code generation.

Include:

1. User Stories (detailed)

2. Acceptance Criteria (testable)

3. API Endpoint Definition (OpenAPI 3.0 format)

4. Data Models (JSON Schema)

Requirement: {requirements.Description}

";

try

{

var chatCompletion = await client.GetChatCompletionsAsync(

deploymentName: deployName,

messages: new List<ChatRequestMessage>

{

new ChatRequestSystemMessage("You are an expert AI assistant for refining product requirements."),

new ChatRequestUserMessage(prompt)

});

var refinedSpec = chatCompletion.Value.Choices[0].Message.Content;

// Store refinedSpec in a Git repository or Azure Blob Storage

await StoreSpecificationAsync(refinedSpec);

log.LogInformation("Product intent successfully clarified and specification generated.");

}

catch (Exception ex)

{

log.LogError($"Error clarifying intent: {ex.Message}");

// Implement error handling and retry mechanisms

}

}

private static Task StoreSpecificationAsync(string spec)

{

// Placeholder: Implement logic to push to Git or save to Azure Blob Storage

Console.WriteLine("Storing specification:\n" + spec);

return Task.CompletedTask;

}

}

public class ProductRequirement

{

public string Id { get; set; }

public string Description { get; set; }

// Other metadata

}

This function, when triggered, calls Claude Code with a carefully crafted prompt. The output will be a structured specification, e.g., a YAML file containing an OpenAPI spec and JSON schemas.

2. Code Generation from Specification

With the refinedSpec in hand, an Azure DevOps Pipeline or GitHub Actions workflow can trigger another agent (or the same Azure Function re-tasked) to generate code.

Agent Prompt for Code Generation:

Generate a .NET Core Web API controller and corresponding DTOs based on the following OpenAPI specification. Ensure the controller has endpoints for POSTing and GETting profile pictures. Include basic validation.

The output from Claude Code could be a C# file like this:

// Generated Controller (Snippet)

[ApiController]

[Route("api/[controller]")]

public class ProfilePictureController : ControllerBase

{

private readonly ILogger<ProfilePictureController> _logger;

// Assume some storage service is injected

public ProfilePictureController(ILogger<ProfilePictureController> logger)

{

_logger = logger;

}

[HttpPost("upload")]

public async Task<IActionResult> UploadProfilePicture([FromForm] ProfilePictureUploadDto model)

{

if (model.File == null || model.File.Length == 0)

{

return BadRequest("No file uploaded.");

}

if (!model.File.ContentType.StartsWith("image/"))

{

return BadRequest("Invalid file type. Only images are allowed.");

}

// Placeholder for actual storage logic

var imageUrl = await StoreImageAsync(model.File);

return Ok(new { Message = "Profile picture uploaded successfully.", Url = imageUrl });

}

private Task<string> StoreImageAsync(IFormFile file)

{

// In a real scenario, this would upload to Azure Blob Storage, S3, etc.

return Task.FromResult($"https://example.com/images/{Guid.NewGuid()}{Path.GetExtension(file.FileName)}");

}

// GET endpoint would likely involve fetching from storage and returning

}

// Generated DTO

public class ProfilePictureUploadDto

{

[Required]

[FileExtensions("jpg,jpeg,png")]

public IFormFile File { get; set; }

// Potentially other metadata like UserId if not inferred from auth

}

3. AI-Powered QA and Validation

This is where we move beyond traditional unit tests. We can use Azure AI services (like Text Analytics, or even a fine-tuned Claude instance) to analyze test results or even directly test outputs.

Scenario: A new version of the upload endpoint is deployed. We run integration tests. One test fails with an ambiguous error.

The Failure Analysis Agent could:

- Examine the generated code and the failing test.

- Query Claude Code (or another LLM) with the context: “Analyze this C# code snippet, the OpenAPI spec, and the following test failure log. Suggest a likely cause and a potential fix. [Code Snippet] [OpenAPI Spec Snippet] [Test Failure Log]”

Example AI QA Task:

Let’s say the upload endpoint returns a JSON response. An AI QA agent could be tasked with validating the semantic correctness of that response.

Prompt for Semantic QA Agent:

Given the original requirement 'As a user, I want to be able to upload profile pictures to my account.' and the generated API response:

{

"message": "Profile picture uploaded successfully.",

"url": "https://example.com/images/a1b2c3d4-e5f6-7890-1234-567890abcdef.jpg"

}

Verify if this response semantically fulfills the user's expectation. Does the 'url' field correctly represent a location for the uploaded picture? Is the 'message' informative and appropriate?

This allows us to catch subtle regressions where the code technically works but doesn’t deliver the intended user experience.

Common Pitfalls and How to Avoid Them

- Hallucinations and Inaccurate Code:

- Mitigation: Rigorous Prompt Engineering: Be extremely specific in prompts, providing context, desired output format, and constraints. Iterative Refinement: Use Claude’s conversational abilities to refine generated code or specifications. Human Review Gates: Crucial for validating AI-generated code, especially for critical components. Implement strong test coverage to catch errors early.

- Over-reliance on Automation:

- Mitigation: Maintain Human Oversight: AI is a co-pilot, not an autopilot. Complex logic, architectural decisions, and security-sensitive areas require human expertise. Focus on Repetitive Tasks: Automate tasks that are tedious, error-prone, or time-consuming for humans.

- Specification Drift:

- Mitigation: Single Source of Truth: The AI-generated specification should be the definitive source. Any deviation should be logged and reviewed. Version Control Everything: Specifications, prompts, and generated code should all be under version control.

- Integration Complexity:

- Mitigation: Modular Design: Break down the pipeline into independent, loosely coupled agents and services. Standardized Interfaces: Use well-defined APIs and data formats (e.g., OpenAPI, JSON Schema). Orchestration Tools: Leverage tools like Azure Logic Apps, Azure Functions, or workflow engines for managing inter-agent communication.

- Security Concerns:

- Mitigation: Secure API Keys and Credentials: Use Azure Key Vault. Input Validation: Always validate inputs to AI models and generated code. Prompt Injection Protection: Be aware of prompt injection vulnerabilities, especially when requirements come from untrusted sources. Treat AI models as potentially insecure endpoints.

- Cost Management:

- Mitigation: Efficient Prompting: Shorter, more targeted prompts reduce token usage. Caching: Cache common responses or generated artifacts. Optimized Model Usage: Choose the right model for the task (e.g., smaller models for simpler tasks).

- Lack of Traceability:

- Mitigation: Comprehensive Logging: Log every step, including the prompts used, the AI responses, and the actions taken. Audit Trails: Maintain clear audit trails of all automated actions.

Conclusion

Building a Product Owner to QA software delivery pipeline powered by Claude Code and Azure AI represents a significant leap in developer productivity and quality assurance. By architecting for intent capture, intelligent code generation, and AI-augmented testing, we can create systems that are not only faster but also more robust. The key lies in a well-defined architecture, meticulous prompt engineering, and a healthy dose of human oversight. This agent-driven approach transforms the development lifecycle from a series of manual handoffs into a continuous, intelligent flow from abstract intent to verified, deployed software.